GSA SER Verified Lists Vs Scraping

Choosing the right source of targets is often what separates a high-performing GSA Search Engine Ranker campaign from one that wastes resources and attracts penalties. Two primary methods dominate the discussion: buying pre‑verified lists and scraping your own targets on the fly. Both have a place in a serious link builder’s toolbox, but they serve very different needs. This article breaks down the GSA SER verified lists vs scraping debate, giving you the insights required to make an informed decision for your specific projects.

What Are GSA SER Verified Lists?

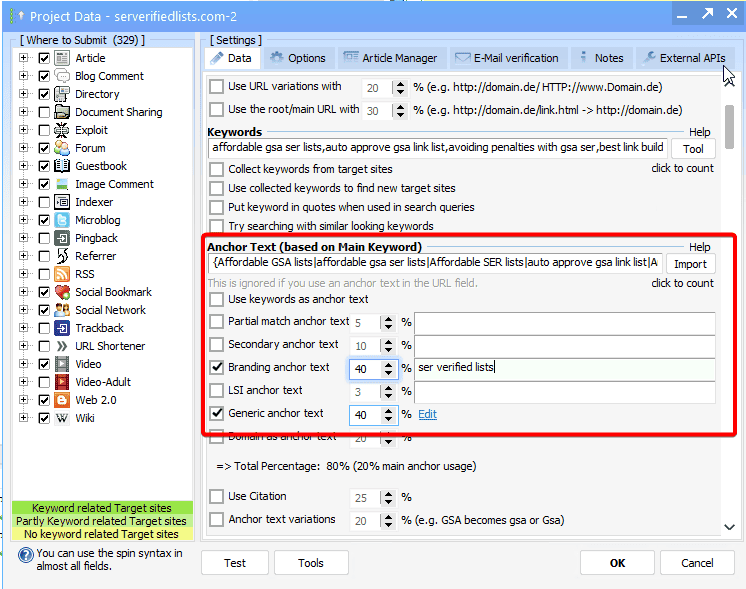

A verified list is a curated collection of URLs that have already been tested for compatibility with GSA SER. Vendors use automated tools to confirm that a target can accept a submission, register an account, or place a link, and they typically categorize the entries by platform, language, PageRank, and other metrics. Instead of your software wasting time on dead sites or blogs with registration disabled, you feed it a ready‑to‑use pool of potential backlinks.

Reputable list sellers update their inventories regularly, removing defunct domains and adding freshly discovered engines. When you load a verified list, you are essentially paying for a shortcut that bypasses most of the trial‑and‑error phase. The files are usually imported as project files or target URL text files directly into GSA SER.

What Does Scraping Mean for GSA SER?

Scraping, in the context of GSA SER, means letting the software harvest its own targets from search engines rather than relying on a pre‑supplied list. GSA SER’s built‑in search engine scraper queries Google, Bing, Yandex, and many other sources using your selected footprints and keywords. It then parses the results, extracts URLs, and attempts to post to whatever it finds, all in real time. You can also harvest targets with third‑party scrapers such as Scrapebox and import the results later. GSA’s companion tools, Captcha Breaker and Proxy Scraper, do not gather targets themselves, but they supply the captcha solving and proxies that a scraping setup depends on.
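The expansion step is easy to underestimate: every footprint is paired with every keyword, so the number of search queries grows multiplicatively. Below is a minimal Python sketch of that expansion. It assumes a simple `footprint "keyword"` query format, and the footprints and keywords are hypothetical placeholders, not GSA SER's built‑in lists or its internal query syntax.

```python
from itertools import product


def build_queries(footprints: list[str], keywords: list[str]) -> list[str]:
    """Return one search query per (footprint, keyword) pair.

    The format (footprint, a space, then the quoted keyword) is an
    assumption for illustration, not GSA SER's internal format.
    """
    return [f'{fp} "{kw}"' for fp, kw in product(footprints, keywords)]


# Hypothetical footprints and keywords, for illustration only.
footprints = ['"powered by ExampleCMS"', "inurl:example-guestbook"]
keywords = ["gardening", "composting"]

queries = build_queries(footprints, keywords)
for q in queries:
    print(q)
```

Two footprints and two keywords yield four queries. At 500 footprints and 1,000 keywords the same expansion yields 500,000, which helps explain why scraping burns through proxies so quickly.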

Scraping gives you practically unlimited, on‑demand access to targets, with no expiration date tied to a vendor’s maintenance cycle. However, the quality of what you scrape depends entirely on your footprint library, proxy quality, and captcha‑solving setup.

GSA SER Verified Lists vs Scraping: Key Differences

Let’s dissect the real‑world differences point by point. Understanding them clarifies when each method pulls ahead in the GSA SER verified lists vs scraping decision.

Quality and Relevance

- Verified lists: Pre‑filtered to include only targets that actually accept registrations, guest posts, or comments. Good sellers also segment by niche-relevant categories, so a health blog list won’t be full of warez forums. This raises the average link quality right out of the gate.

- Scraping: You get a raw, unfiltered stream. Even with perfect footprints, a large percentage of scraped URLs will be dead, no‑follow, already spammed, or completely unrelated to your niche. Manual post‑processing or aggressive tiered filtering is often required to avoid a sky‑high fail rate.

Freshness and Dead Link Rate

- Verified lists: A professionally maintained list usually has a very low dead link rate at the time of purchase. If you buy a list that hasn’t been updated in weeks, though, the dead link rate climbs fast as domains expire and spam filters tighten.

- Scraping: Freshness is only as good as your scraping setup. Because you harvest from live search indexes, you catch sites that appeared today. However, live scraping also picks up temporary servers, parked domains, and sites that start blocking automated posts almost immediately. Dead links are an ongoing battle.

Footprint Risk

- Verified lists: When you share a list with thousands of other users, you leave an identical footprint pattern. If Google de‑indexes a handful of those targets, it can easily extrapolate and penalize entire networks. Scaled link building with the same widely circulated list is a known risk.

- Scraping: Because you pull targets using a variety of unique or semi‑unique footprints combined with fresh proxies, your backlink profile looks far more organic. Scraping drastically reduces the “common denominator” that makes mass link schemes detectable.
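One rough way to put a number on that “common denominator” is the share of your target domains that also appear in a widely circulated list. The Python sketch below is an illustrative heuristic only. It does not reflect how any search engine actually detects link schemes, and the function name and domain sets are hypothetical.

```python
def shared_fraction(my_domains: set[str], public_domains: set[str]) -> float:
    """Fraction of my target domains that also appear in a widely shared list."""
    if not my_domains:
        return 0.0  # nothing to compare, so nothing is shared
    return len(my_domains & public_domains) / len(my_domains)


# Hypothetical domain sets for illustration.
mine = {"a.example", "b.example", "c.example", "d.example"}
public_list = {"a.example", "b.example", "z.example"}

print(f"{shared_fraction(mine, public_list):.0%} of my targets are in the public list")
```

A value near 0% means your targets are largely your own. A value near 100% means you are effectively running the public list.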


Time and Effort

- Verified lists: You import a file and hit start. Almost no maintenance is required beyond setting up the project. This is perfect for beginners or people who want to outsource the technical heavy lifting.

- Scraping: You need a deep footprint collection, rotating proxies, reliable captcha solving, and the patience to let GSA SER search for hours before the first successful links appear. The learning curve is steep, and the daily monitoring is non‑trivial.

Cost Comparison

- Verified lists: A one‑time purchase typically ranges from $10 to $50 for a solid multi‑platform pack. Subscription models can run higher but guarantee ongoing updates. No additional proxy bandwidth is needed to search for targets, which saves money.

- Scraping: The scraping software itself is often free (built into GSA SER), but you will burn through proxies, captcha credits, and electricity much faster. The hidden cost is your time. In the long run, a well‑tuned scraping rig can be very cost‑effective, but the upfront investment in learning and infrastructure adds up.
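List costs are mostly one‑time, while scraping costs recur, so comparing total spend per verified link puts the two on the same footing. The Python sketch below does that arithmetic. Every price, duration, and link count in it is a hypothetical placeholder, not a market figure. The optional `time_cost` argument is one way to price in the hours that the cost comparison calls the hidden cost.

```python
def cost_per_verified_link(upfront: float, monthly: float, months: int,
                           verified_links: int, time_cost: float = 0.0) -> float:
    """Total spend (one-time + recurring + optional time) per verified link."""
    if verified_links <= 0:
        raise ValueError("verified_links must be positive")
    total = upfront + monthly * months + time_cost
    return total / verified_links


# Hypothetical figures for illustration only. Posting proxies, which both
# approaches need, are left out of both sides.
# Verified list: one-time pack, no search-proxy spend.
list_cpl = cost_per_verified_link(upfront=30, monthly=0, months=3, verified_links=1500)
# Scraping: no upfront purchase, but recurring search-proxy and captcha spend.
scrape_cpl = cost_per_verified_link(upfront=0, monthly=40, months=3, verified_links=8000)
# Same scraping run with 20 hours valued at a hypothetical $10/hour.
scrape_cpl_with_time = cost_per_verified_link(upfront=0, monthly=40, months=3,
                                              verified_links=8000, time_cost=20 * 10)

print(f"verified list:        ${list_cpl:.3f} per link")
print(f"scraping:             ${scrape_cpl:.3f} per link")
print(f"scraping + your time: ${scrape_cpl_with_time:.3f} per link")
```

With these made‑up inputs, scraping is cheaper per link until your time is priced in. Substitute your own numbers to see where the break‑even falls for your setup.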

When to Choose Verified Lists Over Scraping

Opt for verified lists if you are running tier‑2 or tier‑3 campaigns that simply need a high volume of no‑brainer links, or if you are new to GSA SER and want to see quick success without frustration. They also work well when you’re targeting rare platforms (like specific article directories or wiki engines) that take forever to find through random scraping. If your campaign involves a limited‑time promotion where speed matters more than absolute uniqueness, a freshly updated verified list will get you indexed faster.

On the other hand, dedicated scraping wins hands‑down for tier‑1 money site campaigns where footprint diversity is critical. It’s also the smart choice for experienced users who can build a well‑filtered, private list over time. If you are operating in very competitive niches where any detectable pattern leads to rapid de‑indexation, you simply cannot afford to share targets with a public list.

FAQ: GSA SER Verified Lists vs Scraping

1. Can I combine verified lists and scraping in the same GSA SER project?

Absolutely. One of the most effective strategies is to load a verified list as your primary target source and enable background scraping to top it up. This gives you the immediate link velocity from the list while the scraper discovers fresh, unique URLs over time.

2. Are verified lists completely safe for money sites?

They are rarely recommended for a pure money site campaign. The more a list is shared, the higher the chance it’s already flagged. Use them on tiers or as a seeding list that you filter heavily. Never import a generic public verified list directly to a site you can’t afford to lose.

3. How do I know if a scraped list is better than a verified one?

Judge it by the verified success rate in GSA SER. A good scraped list, once duplicates and dead links have been removed with a list‑cleaning tool such as Scrapebox, can match or exceed a mediocre verified list. Its advantage lies in its uniqueness, not necessarily in its raw posting success rate.
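Below is a minimal Python sketch of the two checks this answer describes. The first collapses duplicates. Deduplicating at host level is an assumption here, since some platforms are better deduplicated by full URL. The second computes the verified success rate as verified links divided by attempted targets. Dead‑link checking needs live HTTP requests and is left out. The function names and run numbers are hypothetical.

```python
from urllib.parse import urlsplit


def dedupe_by_host(urls: list[str]) -> list[str]:
    """Keep the first URL for each host, ignoring a leading 'www.' and invalid lines."""
    seen: set[str] = set()
    kept: list[str] = []
    for raw in urls:
        url = raw.strip()
        host = urlsplit(url).hostname  # lowercased by urllib; None if there is no host
        if not host:
            continue
        host = host.removeprefix("www.")
        if host not in seen:
            seen.add(host)
            kept.append(url)
    return kept


def verified_success_rate(verified: int, attempted: int) -> float:
    """Share of attempted targets that became verified links (0.0 if none attempted)."""
    return verified / attempted if attempted else 0.0


scraped = [
    "http://example.com/a",
    "https://www.example.com/b",   # same host as the first entry once 'www.' is ignored
    "http://blog.example.org/post",
    "not a url",                   # no scheme or host, so it is dropped
]
print(dedupe_by_host(scraped))

# Hypothetical run results for a scraped list and a purchased list.
print(f"scraped:  {verified_success_rate(420, 3000):.1%}")
print(f"verified: {verified_success_rate(300, 2500):.1%}")
```

Comparing the two rates only makes sense when both lists run under the same project settings, proxies, and captcha setup.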

4. Do verified lists work without proxies?

Partly. A verified list removes the need for search‑engine proxies, because there is no harvesting phase. You still need posting proxies, however, if you want to avoid IP bans on the target sites.

5. What’s the biggest mistake beginners make in the verified list vs scraping choice?

They treat it as an either‑or decision forever. Smart link builders start with a verified list to learn the platform, then gradually invest in scraping infrastructure as their campaigns scale. The real value is understanding your campaign’s tolerance for shared footprints.

In the eternal GSA SER verified lists vs scraping argument, there is no universal winner. Verified lists trade uniqueness for speed and ease. Scraping trades an upfront time investment for unbeatable footprint diversity. Align the method with the tier, how competitive the niche is, and the amount of control you truly need. Often, a hybrid approach is where the sustainable rankings hide.